As all misguidedly-lazy programmers are wont to do, I decided that it would be easier to ‘enhance’ CouchDB to meet my needs rather than to rewrite visotank to use SQLAlchemy. Also, I wanted to understand what CouchDB was doing under the hood with views and try my hand at some Erlang.

CouchDB, as currently implemented, maintains a lot of information for each mapped document. Each View Group has an associated B-tree whose keys are Document Ids and whose values are lists of {View Id, Actual-Key-You-Mapped-In-That-View} tuples, one for every key mapped from that document by every view in the view group. Each View, in turn, has its own B-tree whose keys are {Actual-Key-You-Mapped, Document Id} tuples and whose values are the Actual-Value-You-Mapped.
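To make the two index shapes concrete, here is a rough Python model of them. It is only a sketch: plain dicts stand in for the B-trees (so nothing is kept sorted), and the names `build_indexes`, `group_index`, and `view_indexes` are made up for illustration rather than taken from the CouchDB source.

```python
from collections import defaultdict


def build_indexes(docs, views):
    """Model the per-group and per-view indexes with plain dicts.

    docs:  {doc_id: doc}
    views: {view_id: map_fn}, where map_fn(doc) returns (key, value) pairs.
    """
    # View Group index: doc_id -> [(view_id, mapped_key), ...]
    group_index = defaultdict(list)
    # One index per view: (mapped_key, doc_id) -> mapped_value
    view_indexes = {view_id: {} for view_id in views}

    for doc_id, doc in docs.items():
        for view_id, map_fn in views.items():
            for key, value in map_fn(doc):
                group_index[doc_id].append((view_id, key))
                view_indexes[view_id][(key, doc_id)] = value
    return dict(group_index), view_indexes


# Hypothetical documents and views, just to exercise the model.
docs = {
    "msg1": {"author": "tom", "subject": "cheese"},
    "msg2": {"author": "tom", "subject": "cats"},
}
views = {
    "by_author": lambda doc: [(doc["author"], 1)],
    "by_subject": lambda doc: [(doc["subject"], 1)],
}
group_index, view_indexes = build_indexes(docs, views)
```

Note that every mapped key shows up twice, once in the group index and once in the view's own index, and every document gets its own entry in each view it maps into.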

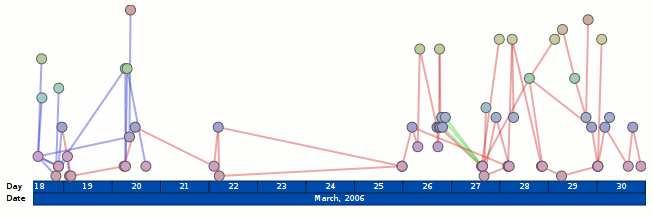

This is all well and good, but it is a poor fit for one of my key use-cases: reducing e-mail message traffic to date-binned summary statistics so I can render graphics. If I want the weekly-messages-sent count for a given ‘author’, map(message.author, blah) will let me filter down to only the messages sent by that author, but no matter what blah is, I will still get one row per message.
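A small Python sketch of the mismatch, under made-up assumptions: the rows below imitate what a view mapping the author to the message date might return, and `rows_for_key` and `weekly_counts` are hypothetical client-side helpers, not anything CouchDB provides.

```python
from datetime import date

# Hypothetical view rows in (key, doc_id, value) form: one per message.
rows = [
    ("tom", "msg1", date(2007, 10, 1)),
    ("tom", "msg2", date(2007, 10, 3)),
    ("tom", "msg3", date(2007, 10, 9)),
    ("bob", "msg4", date(2007, 10, 2)),
]


def rows_for_key(rows, key):
    """Filter the view down to a single key, as a key-restricted query would."""
    return [row for row in rows if row[0] == key]


def weekly_counts(rows):
    """Bin rows by ISO (year, week); this work lands on the client."""
    counts = {}
    for _key, _doc_id, sent in rows:
        year, week, _weekday = sent.isocalendar()
        counts[(year, week)] = counts.get((year, week), 0) + 1
    return counts


tom_rows = rows_for_key(rows, "tom")   # still one row per message
tom_weekly = weekly_counts(tom_rows)   # the summary I actually wanted
```

The filtering is cheap, but the summary only exists after every matching row has been fetched and counted on the client.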

Long blog post short, I have implemented a hackish first-pass reduce/accumulate solution to my problem. The idea is that ‘stews’ allow you to aggregate mapped data that shares the same key. I’m a little fuzzy on exactly what the definition of ‘reduce’ is in the map/reduce papers (it’s been a while, if ever), so we’ll call this ‘accumulate’ (in the SICP/Scheme sense). It is a hack because:

- It does not unify views and ‘stews’. Whereas views are defined under ‘_design’ and accessed via ‘_view’, stews are defined under ‘_pot’ and accessed via ‘_stew’.

- Values can only be integers right now, and it’s assumed you want to add them. (No custom JavaScript logic!)

- I have not yet dealt with modified/removed documents. Which is to say that if you modify or remove a stew-mapped document, your accumulated values will climb ever-skyward.

- It is in no way, shape, or form intended to be anything other than a learning experiment. (It is my hope that Damien Katz magically solves my problems in the next release. Having said that, I’m not opposed to trying to actually implement a more solid feature along these lines; coding in Erlang is wicked awesome. (sounds better with a fake accent))
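The accumulate idea, and the modified-document caveat from the list above, can be sketched in a few lines of Python. This is a toy model under my own assumptions (the `Stew` class and its `index` method are invented for illustration), not the Erlang code in the branch.

```python
class Stew:
    """Toy accumulating index: mapped key -> running integer sum."""

    def __init__(self, map_fn):
        self.map_fn = map_fn
        self.totals = {}

    def index(self, doc):
        # Add each mapped value to the running total for its key.
        # Nothing records what this document contributed earlier, so
        # indexing a modified document counts it a second time.
        for key, value in self.map_fn(doc):
            self.totals[key] = self.totals.get(key, 0) + value


stew = Stew(lambda doc: [(doc["author"], 1)])
stew.index({"_id": "tom1", "author": "tom"})
stew.index({"_id": "tom2", "author": "tom"})
count_before_edit = stew.totals["tom"]

# Re-index an edited version of tom1: the total climbs even though
# there are still only two messages from tom.
stew.index({"_id": "tom1", "author": "tom", "subject": "edited"})
count_after_edit = stew.totals["tom"]
```

One plausible fix would be to remember each document's previous contributions, much as the View Group B-tree remembers each document's mapped keys, and subtract them before adding the new ones.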

It just so happens that these constraints are perfectly in line with visotank’s needs. Using stews and otherwise limiting my use of views, CouchDB is less ridiculous in its view-update times and the fully-populated (view/stew-wise) from-scratch ‘messages’ database tops out at 77M rather than 1.2G.

Anyways, if anyone is interested in the code (or the comments I added to the existing couch_view_group.erl logic), my bzr branch for CouchDB is at: http://www.visophyte.org/rev_control/bzr/couchdb/visbrero-couchdb/ . My bzr branch for couchdb-python, which adds a simple unit test for stews, is at: http://www.visophyte.org/rev_control/bzr/couchdb-python/visbrero/ .

Update! The bzr repository is powerful messed up, so a better choice might be my changes in patch form: http://www.visophyte.org/rev_control/patches/couchdb/visbrero-couchdb-stews-1.patch

Update 2! The bzr repository accessible at http://clicky.visophyte.org/rev_control/bzr/couchdb/visbrero-couchdb/ works and there’s a checkout with working copy (that you can browse) at http://clicky.visophyte.org/rev_control/bzr-checkouts/couchdb/visbrero-couchdb/ . Note that these locations are not guaranteed to be valid for all time, but will be good for at least a month or two.

I fear my (sleepy) explanation may not be sufficient, so the unit test I added to couchdb-python may speak better to this end:

    self.db['tom1'] = {'author': 'tom', 'subject': 'cheese'}
    self.db['tom2'] = {'author': 'tom', 'subject': 'cats'}
    self.db['tom3'] = {'author': 'tom', 'subject': 'mice'}
    self.db['bob1'] = {'author': 'bob', 'subject': 'hats'}
    self.db['jon1'] = {'author': 'jon', 'subject': 'hats'}
    self.db['kim1'] = {'author': 'kim', 'subject': 'cats'}
    self.db['kim2'] = {'author': 'kim', 'subject': 'cows'}

    self.db['_pot/test'] = {'views': {
        'authors': 'function(doc) { map(doc.author, 1) }',
        'subjects': 'function(doc) { map(doc.subject, 1) }'
    }}

    authors = dict([(row.key, row.value) for row in self.db.view('_stew/test/authors')])
    self.assertEqual(authors['tom'], 3)
    self.assertEqual(authors['bob'], 1)
    self.assertEqual(authors['jon'], 1)
    self.assertEqual(authors['kim'], 2)

    subjects = dict([(row.key, row.value) for row in self.db.view('_stew/test/subjects')])
    self.assertEqual(subjects['cheese'], 1)
    self.assertEqual(subjects['cats'], 2)
    self.assertEqual(subjects['mice'], 1)

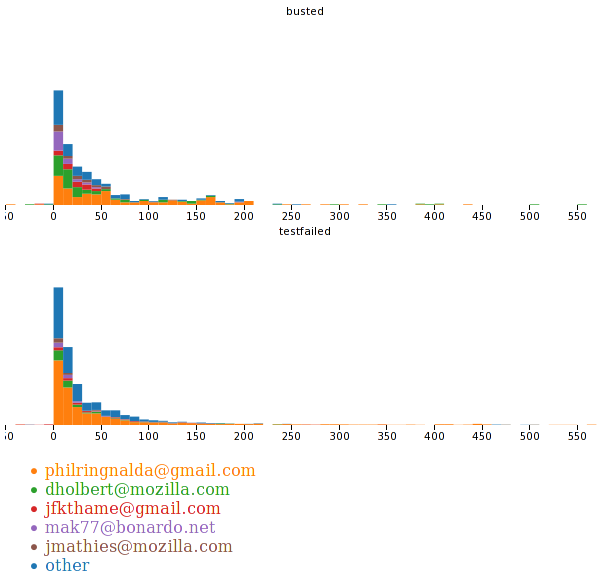

Uh, the spiral visualizations have nothing to do with the post. They are new insofar as I have never posted them before, but they are in fact rather quite old. They have a new aspect in that they now work with the cairo renderer, having relied upon ‘special’ (horrible) custom renderers in the old agg backend.